An entirely open-source AI code assistant inside your editor

May 31, 2024

This is a guest post from Ty Dunn, Co-founder of Continue, that covers how to set up, explore, and figure out the best way to use Continue and Ollama together.

Continue enables you to easily create your own coding assistant directly inside Visual Studio Code and JetBrains with open-source LLMs. Everything can run entirely on your own laptop, or you can deploy Ollama on a server to remotely power code completion and chat, depending on your needs.

To get set up, you’ll want to install Continue (for VS Code or JetBrains) and Ollama.

Once you have them downloaded, here’s what we recommend exploring:

Try out Mistral AI’s Codestral 22B model for autocomplete and chat

As of now, Codestral is our favorite model capable of both autocomplete and chat. It shows how much LLMs have improved at programming tasks. However, at 22B parameters it needs quite a bit of VRAM, and its non-production license restricts it to research and testing purposes, so it might not be the best fit for daily local use.

a. Download and run Codestral in your terminal by running

ollama run codestral

b. Click on the gear icon in the bottom right corner of Continue to open your config.json and add

{
  "models": [
    {
      "title": "Codestral",
      "provider": "ollama",
      "model": "codestral"
    }
  ],
  "tabAutocompleteModel": {
    "title": "Codestral",
    "provider": "ollama",
    "model": "codestral"
  }
}
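Since config.json usually already holds other settings, “add” here means merging these keys into the existing object rather than replacing the file. If you prefer to script that step, here is a minimal Python sketch. The helper names are hypothetical and not part of Continue, and the file location ~/.continue/config.json is an assumption; the gear icon always opens the right file for you.

```python
import json
from pathlib import Path

# Hypothetical constant mirroring the Codestral entry above.
CODESTRAL = {"title": "Codestral", "provider": "ollama", "model": "codestral"}


def merge_ollama_models(config: dict, chat_models: list[dict], autocomplete: dict) -> dict:
    """Return a copy of `config` with chat models and an autocomplete model merged in.

    Chat models whose title already exists are replaced (no duplicates on rerun);
    all other existing models and top-level settings are kept unchanged.
    """
    merged = dict(config)  # shallow copy so the caller's dict is not mutated
    new_titles = {m["title"] for m in chat_models}
    kept = [m for m in merged.get("models", []) if m.get("title") not in new_titles]
    merged["models"] = kept + list(chat_models)
    merged["tabAutocompleteModel"] = autocomplete
    return merged


def update_config_file(path: Path, chat_models: list[dict], autocomplete: dict) -> None:
    """Read config.json (if present), merge the models in, and write it back."""
    config = json.loads(path.read_text()) if path.exists() else {}
    merged = merge_ollama_models(config, chat_models, autocomplete)
    path.write_text(json.dumps(merged, indent=2))
```

For the Codestral setup you would call `update_config_file(Path.home() / ".continue" / "config.json", [CODESTRAL], CODESTRAL)`. Replacing a model with the same title, rather than appending another copy, keeps the model list clean if the script is run more than once.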

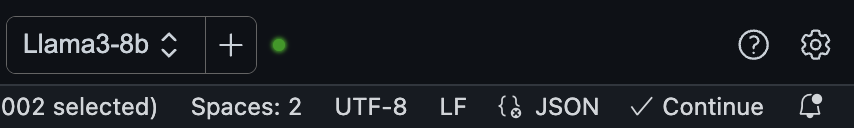

Use DeepSeek Coder 6.7B for autocomplete and Llama 3 8B for chat

Depending on how much VRAM you have on your machine, you might be able to take advantage of Ollama’s ability to run multiple models and handle multiple concurrent requests by using DeepSeek Coder 6.7B for autocomplete and Llama 3 8B for chat. If your machine can’t handle both at the same time, then try each of them and decide whether you prefer a local autocomplete or a local chat experience. You can then use a remotely hosted or SaaS model for the other experience.

a. Download and run DeepSeek Coder 6.7B in your terminal by running

ollama run deepseek-coder:6.7b-base

b. Download and run Llama 3 8B in another terminal window by running

ollama run llama3:8b

c. Click on the gear icon in the bottom right corner of Continue to open your config.json and add

{
  "models": [
    {
      "title": "Llama 3 8B",
      "provider": "ollama",
      "model": "llama3:8b"
    }
  ],
  "tabAutocompleteModel": {
    "title": "DeepSeek Coder 6.7B",
    "provider": "ollama",
    "model": "deepseek-coder:6.7b-base"
  }
}
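Because this setup depends on two separately pulled models, a quick way to catch a typo in config.json is to compare the model names it references against what Ollama has locally. Ollama’s REST API lists pulled models at /api/tags; the sketch below assumes the default address http://localhost:11434, and its helper names are hypothetical. Ollama treats an untagged name such as codestral as codestral:latest, so names are normalized before comparing.

```python
import json
import urllib.request

# Default local Ollama address (assumption; change it if Ollama runs on a server).
OLLAMA_TAGS_URL = "http://localhost:11434/api/tags"


def fetch_pulled_models(url: str = OLLAMA_TAGS_URL) -> dict:
    """Return the parsed JSON body of Ollama's /api/tags endpoint."""
    with urllib.request.urlopen(url) as resp:
        return json.load(resp)


def normalize(name: str) -> str:
    """Map an untagged model name to its implicit ':latest' tag."""
    return name if ":" in name else f"{name}:latest"


def missing_models(config: dict, tags_response: dict) -> list[str]:
    """List Ollama model names referenced in config but not yet pulled."""
    pulled = {normalize(m["name"]) for m in tags_response.get("models", [])}
    referenced = [m for m in config.get("models", []) if m.get("provider") == "ollama"]
    auto = config.get("tabAutocompleteModel")
    if auto and auto.get("provider") == "ollama":
        referenced.append(auto)
    return sorted({m["model"] for m in referenced if normalize(m["model"]) not in pulled})
```

With the server running, `missing_models(config, fetch_pulled_models())` returns the names you still need to `ollama pull`; for the configuration above it would return `["deepseek-coder:6.7b-base"]` if only Llama 3 had been downloaded so far.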

Use nomic-embed-text embeddings with Ollama to power @codebase

Continue comes with a built-in @codebase context provider, which lets you automatically retrieve the most relevant snippets from your codebase. Assuming you already have a chat model set up (e.g. Codestral, Llama 3), you can keep this entire experience local thanks to embeddings with Ollama and LanceDB. As of now, we recommend using nomic-embed-text embeddings.

a. Download nomic-embed-text in your terminal by running

ollama pull nomic-embed-text

b. Click on the gear icon in the bottom right corner of Continue to open your config.json and add

{
  "embeddingsProvider": {
    "provider": "ollama",
    "model": "nomic-embed-text"
  }
}

c. Depending on the size of your codebase, indexing might take some time. Once it finishes, you can ask questions, and the most relevant sections of your codebase will automatically be found and used in the answer (e.g. “@codebase what is the default context length for Llama 3?”)
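Conceptually, @codebase embeds chunks of your code and your question with the same embeddings model and returns the chunks whose vectors lie closest to the question. Continue’s real pipeline stores vectors in LanceDB and does more than this, so the Python below only illustrates the ranking step, using cosine similarity. The `embed` helper assumes Ollama’s /api/embeddings endpoint at the default local address, which takes a model name and a prompt and returns an embedding; all other names are hypothetical.

```python
import json
import math
import urllib.request

# Default local Ollama embeddings endpoint (assumption).
OLLAMA_EMBED_URL = "http://localhost:11434/api/embeddings"


def embed(text: str, model: str = "nomic-embed-text") -> list[float]:
    """Request an embedding vector for `text` from a local Ollama server."""
    body = json.dumps({"model": model, "prompt": text}).encode()
    req = urllib.request.Request(
        OLLAMA_EMBED_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)["embedding"]


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list[float], snippet_vecs: dict[str, list[float]], k: int = 3) -> list[str]:
    """Return the ids of the k snippets most similar to the query vector."""
    ranked = sorted(
        snippet_vecs, key=lambda sid: cosine(query_vec, snippet_vecs[sid]), reverse=True
    )
    return ranked[:k]
```

With a running server, `top_k(embed("where is the retry logic?"), {path: embed(text) for path, text in chunks.items()})` would rank a hypothetical `chunks` dict mapping file paths to their contents.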

Fine-tune StarCoder 2 on your development data and push it to the Ollama model library

When you use Continue, you automatically generate data on how you build software. By default, this development data is saved to .continue/dev_data on your local machine. When combined with the code that you ultimately commit, it can be used to improve the LLM that you or your team use (if you allow). For example, you can use accepted autocomplete suggestions from your team to fine-tune a model like StarCoder 2 to give you better suggestions.

a. Extract and load the “accepted tab suggestions” into Hugging Face Datasets

b. Use Hugging Face Supervised Fine-tuning Trainer to fine-tune StarCoder 2
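Step a depends on how the development data is laid out, which this post doesn’t specify. The sketch below assumes, hypothetically, a JSON Lines file in which each record has `prefix`, `suffix`, `completion`, and `accepted` fields; check the actual files under .continue/dev_data and adapt the field names. It keeps only accepted suggestions and formats each one as a fill-in-the-middle string using the `<fim_prefix>`, `<fim_suffix>`, and `<fim_middle>` tokens of the StarCoder family (verify them against the tokenizer you fine-tune).

```python
import json
from pathlib import Path

# StarCoder-family fill-in-the-middle tokens (assumed; check your tokenizer).
FIM_PREFIX, FIM_SUFFIX, FIM_MIDDLE = "<fim_prefix>", "<fim_suffix>", "<fim_middle>"


def to_training_example(record: dict) -> dict:
    """Turn one accepted suggestion into a single FIM-formatted training string."""
    text = (
        f"{FIM_PREFIX}{record['prefix']}{FIM_SUFFIX}{record.get('suffix', '')}"
        f"{FIM_MIDDLE}{record['completion']}"
    )
    return {"text": text}


def extract_accepted(lines) -> list[dict]:
    """Keep only records marked as accepted, skipping blank or malformed lines."""
    examples = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue
        if record.get("accepted"):
            examples.append(to_training_example(record))
    return examples


def write_dataset(src: Path, dst: Path) -> int:
    """Read a (hypothetical) dev-data JSONL file and write a training JSONL file."""
    with src.open() as f:
        examples = extract_accepted(f)
    with dst.open("w") as f:
        for ex in examples:
            f.write(json.dumps(ex) + "\n")
    return len(examples)
```

The output file can then be loaded with `load_dataset("json", data_files="accepted.jsonl")` from Hugging Face Datasets for step b. Many TRL versions have the trainer read a "text" column by default, which is why the sketch uses that key, but treat this as an assumption and check the version you install.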

Learn more about Ollama by using @docs to ask questions with the help of Continue

Continue also comes with a built-in @docs context provider, which lets you index and retrieve snippets from any documentation site. Assuming you already have a chat model set up (e.g. Codestral, Llama 3), you can keep this entire experience local by providing a link to the Ollama README on GitHub and asking questions with it as context.

a. Type @docs in the chat sidebar, select “Add Docs”, copy and paste “https://github.com/ollama/ollama” into the URL field, and type “Ollama” into the title field

b. It should quickly index the Ollama README. You can then ask it questions, and the most relevant sections will automatically be found and used in the answer (e.g. “@Ollama how do I run Llama 3?”)

Join our Discord!

Now that you have tried these different explorations, you should have a better sense of how best to use Continue and Ollama for your own work. If you ran into problems along the way or have questions, join the Continue Discord or the Ollama Discord for help and answers.